In Part 1 and Part 2, I went through Huawei’s new compute architecture for AI (the Atlas 950 SuperPoD). But what I didn’t appreciate before the event was how much mobile networks are going to change with AI. And especially with agents.

The rise of agents was probably the biggest topic at the Mobile World Congress. And the development of agent-native networks is my third takeaway.

But first some fun stuff from the Mobile World Congress.

Qualcomm had a pretty cool exhibit. The centerpiece was the Dragonwing (cool name), a massive circular screen mounted on a robot arm. You can talk with it like an AI assistant, and it moves around and shows you images while you talk. There’s something about the size of the Dragonwing that is both awesome and intimidating.

Here’s a photo.

Here are a couple of videos of it in action.

Ok. Back to the topic.

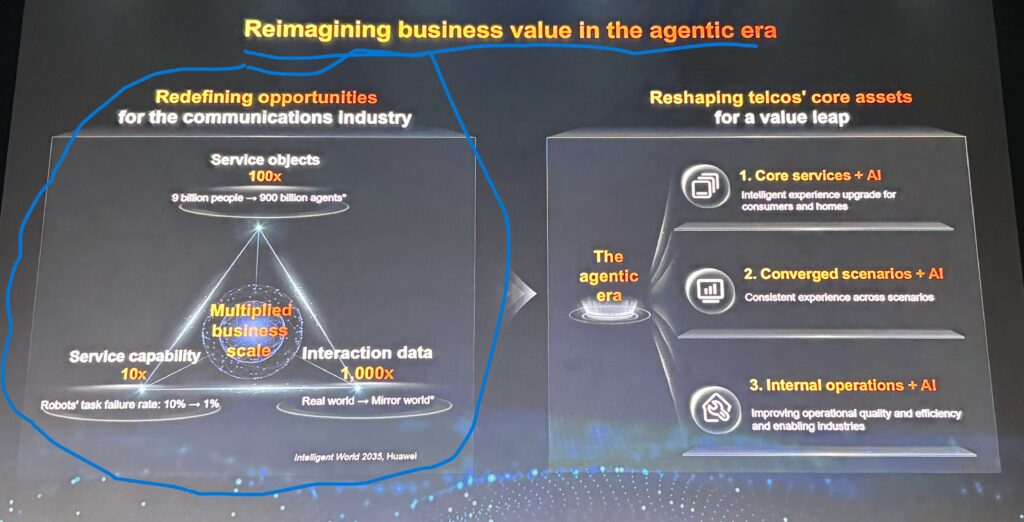

The 900 Billion Agent Network: Why Connectivity is No Longer Just for Humans

Mobile networks are built to enable the activity of +8B humans (and their businesses).

So, what happens when the network is now carrying the activity of +900B agents?

Yang (Eric) Yang, President of Huawei Carrier Business, argued we could very well be looking at networks in 2035 with the following activity:

- +900B agents using the network

- A 1,000x increase in interactions

- A 10x increase in services
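To put those numbers in perspective, here is a quick back-of-envelope sketch. It only uses the two headline figures above (8B humans today, 900B agents in 2035). Both are stated as "+" figures, so treat the result as a rough ratio, not a forecast. The variable names are my own.

```python
# Back-of-envelope: how many agents per human does a 900B-agent network imply?
# Both inputs are the rounded headline figures from the article (assumptions, not precise counts).
HUMANS_TODAY = 8_000_000_000       # "+8B humans"
AGENTS_2035 = 900_000_000_000      # "+900B agents"

agents_per_human = AGENTS_2035 / HUMANS_TODAY
print(f"Roughly {agents_per_human:.1f} agents per human")
```

That is over a hundred agents for every person on the planet, which is why a network designed around human activity starts to look undersized.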

Here is Eric and his slide about what a 2035 agent-native network could be like.

This information is from the Huawei Intelligent World 2035 report, which is definitely worth reading. It’s available here.

In the envisioned 2035 world, network requirements will be very different than today.

- Latency becomes very important. You can’t have cars driving and drones flying if there’s a lag.

- Upload speed is much more important. An AI Agent era will be a lot about sensing the physical world and what’s happening in real-time. That requires fast upload.

- Bandwidth requirements go way up. Agents (and AI) depend on real-time context to understand situations and user intent. That means lots of real-time interactivity.

Think about how our current mobile networks help humans interact.

It is mostly about episodic sharing of information (mostly text and video) and enabling interactions. But agent-native networks will be about a constant sharing of real-time context so that AI and agents can function. It will be a continuous flow of information.

Fang Xiang (Huawei President of Wireless Solutions Product Line) had a good summary of some of the main differences between traditional and agent-native networks.

- Information is getting more complex. Data moving in the network is going from single modal (text, image) to multi-modal.

- The network focus on download speed is shifting to download plus upload speed, with no latency.

- Traditional networks are about giving individual devices access to the network. Agent networks will be about enabling collaboration between devices.

- The network is going from handling discrete interactions with the network to continuous, real-time interactions.

- The current focus on local network optimization is shifting to global network optimization.

- The current focus on APIs is shifting to a focus on tokens and intent.

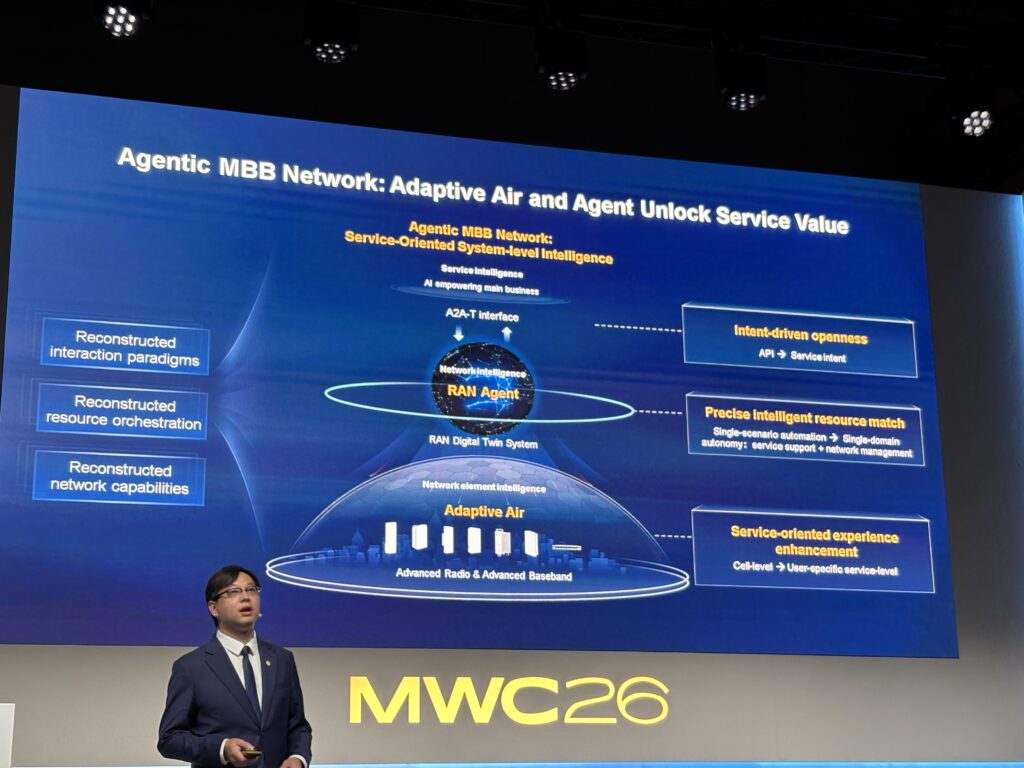

He had some really good points about how carriers can prepare for this by adding intelligence to their 5G and 5GA networks. Specifically, they can deploy Radio Access Network (RAN) Agents and RAN digital twin systems. More on this below.

5G Was Not Born in the Age of AI. But 6G Will Be.

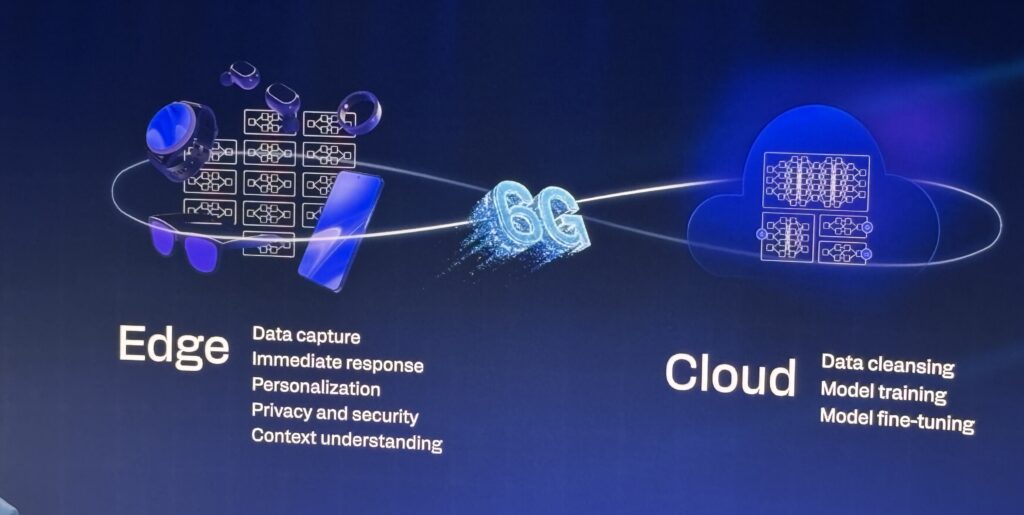

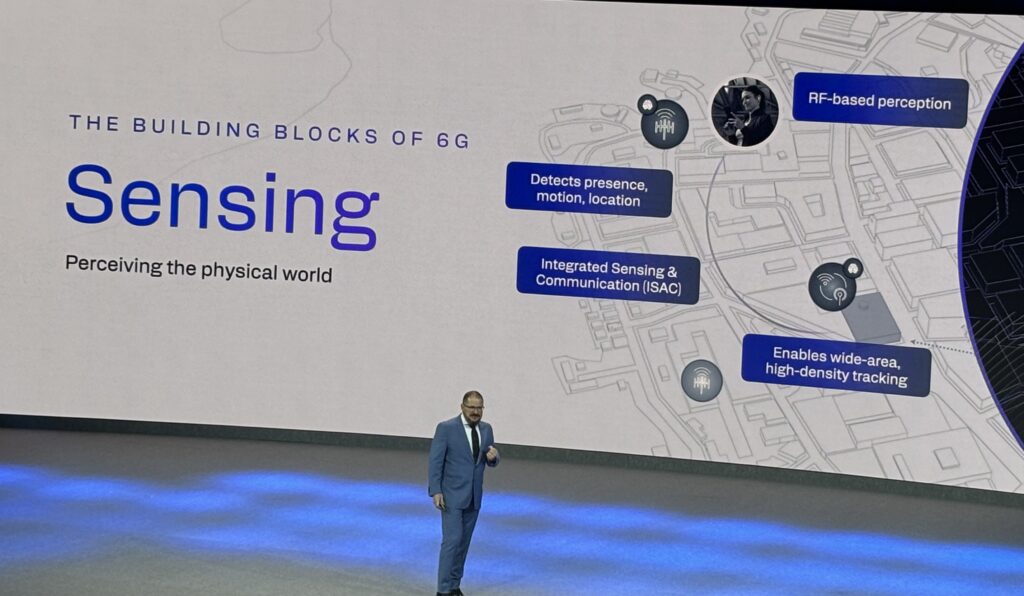

Cristiano Amon, the President of Qualcomm, gave a good talk on the main GSMA stage. He had some good points about how these agent-native networks will emerge with 6G.

His argument was:

- Agents and AI are becoming the primary user interface.

- But agents require real-time context to function.

- Therefore, you need an agent-native network that operates seamlessly between the edge and the cloud. Edge devices will do the sensing and will constantly be gathering real-time information. Information will flow seamlessly and continuously between the edge and the cloud.

- Data will increasingly be about intent. Agents have to know what you are doing and want to do.

Below are some slides from his talk.

He was mostly talking about how 6G is central to an agent-native network. And on a network with hundreds of millions of agents, there will be 3 new needs:

- New connectivity needs. As discussed. He described network connectivity as continuous context and token exchange.

- New compute needs. Not just in the big data centers discussed, but in every aspect of the network. Intelligence and compute need to be built into edge devices, RAN, edge data centers and centralized data centers. See slide below.

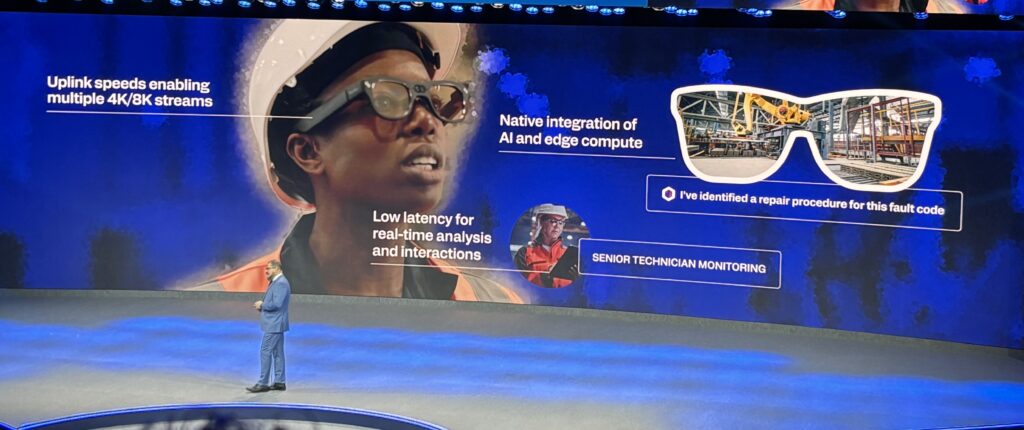

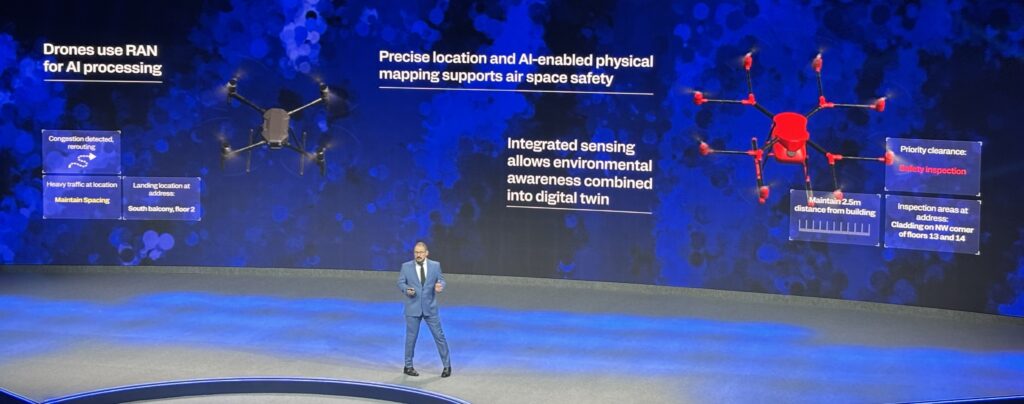

- New sensing capabilities. This is really a new capability. The network and all its connected devices need to be constantly sensing the environment. That’s everything from smart glasses to drones flying over the city. See slides.

Here is the smart glasses example, which would be part of a sensing network. For ICT clients, the wearers could be customers or employees.

The drones will likely do a scary amount of sensing (i.e., surveillance).

That was a pretty good talk. And Qualcomm is really focused on 6G.

But he was followed by Yang Chaobin (CEO of ICT for Huawei), who I think really surpassed him.

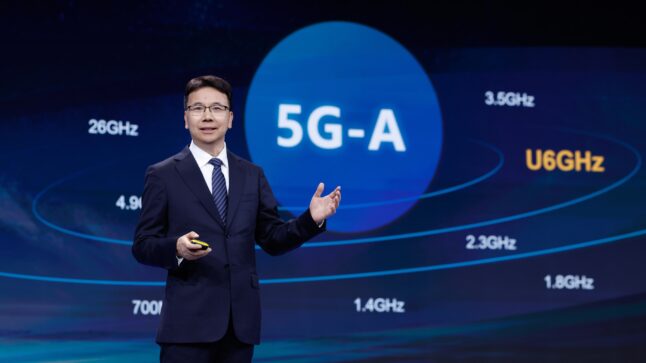

The Case for 5G-Advanced: Closing the AI Gap Before 6G Arrives

Yang Chaobin shifted the conversation from theory to how to actually build an agent-native and AI-native network today. That’s great.

Chaobin argued that AI-native networks really need three things.

- Multimodal AI interactions

- Real-time AI coordination

- Cloud-based AI agents

From the press release for his talk:

- “Networks must move away from being downlink-centric and deliver ultra-high bandwidth both uplink and downlink to support multimodal data exchanges between devices and clouds for AI.”

- “…networks must provide secure, reliable, and ultra-low-latency connectivity to support real-time AI collaboration and intelligent decision-making.”

And he cited uplink speed and bandwidth as the biggest bottleneck to doing this today. The current 60ms uplink latency is a problem.

And he argued that 6G is not going to solve this anytime soon (my words, not his). He mentioned that standardization for 6G is underway, but the standards are not expected to be set before March 2029 (according to 3GPP).

Waiting 5 years is bad strategy. The solution is to extend 5GA and upgrade it for the new AI activity.

5GA is a half-generation step between 5G and 6G that can deliver “10 times higher uplink speeds, superior AI service experience, new IoT technologies like reduced capability (RedCap) and passive IoT, and AI for differentiated network capabilities.”

And Huawei is already at commercial scale in 5GA in 270 cities in +30 provinces. Globally, there are already 70 million 5G-A users. That’s the way forward in 2026.

From the press release:

“After multiple rounds of discussion at the World Radiocommunication Conference (WRC), U6 GHz has been established as a mainstream frequency band for future mobile communications. 5G-A already supports U6 GHz, and mainstream device chips and the industry chain for 5G-A devices are also mature. This means 5G-A is ready for large-scale commercial use.”

His main point was that the path forward for intelligent networks is:

- Upgrade and expand 5GA. Don’t wait for 6G.

- Free up spectrum.

Which brings me back to RAN Agents. This came up quite a bit at the conference as a next step for mobile carriers to improve their performance with AI.

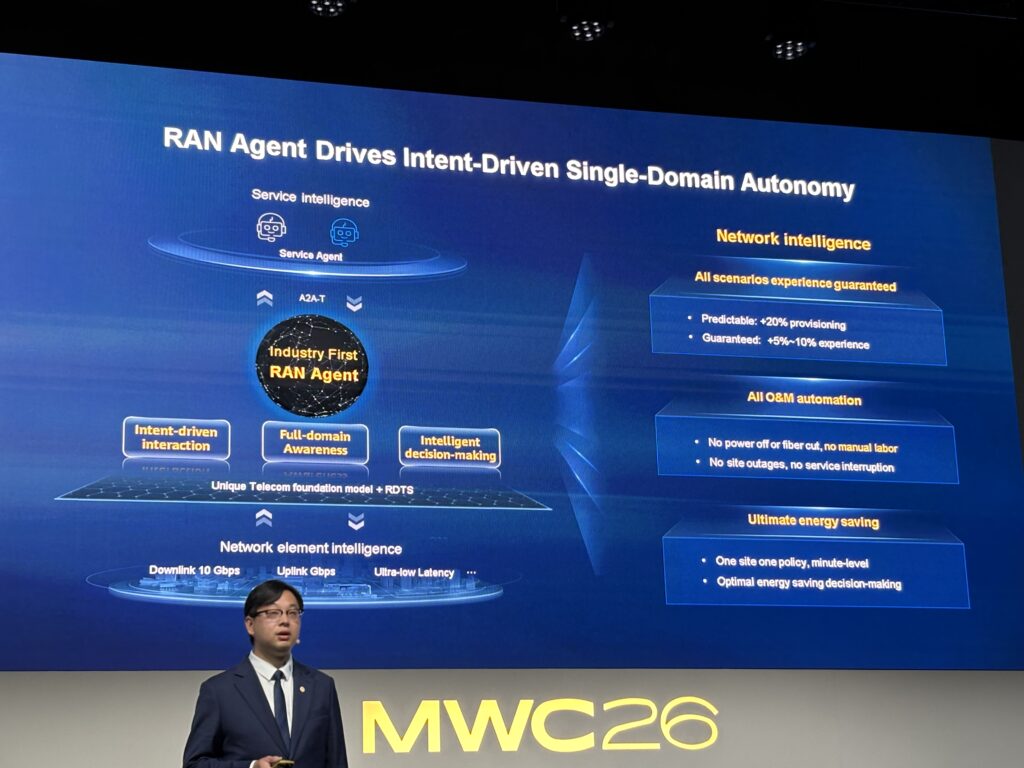

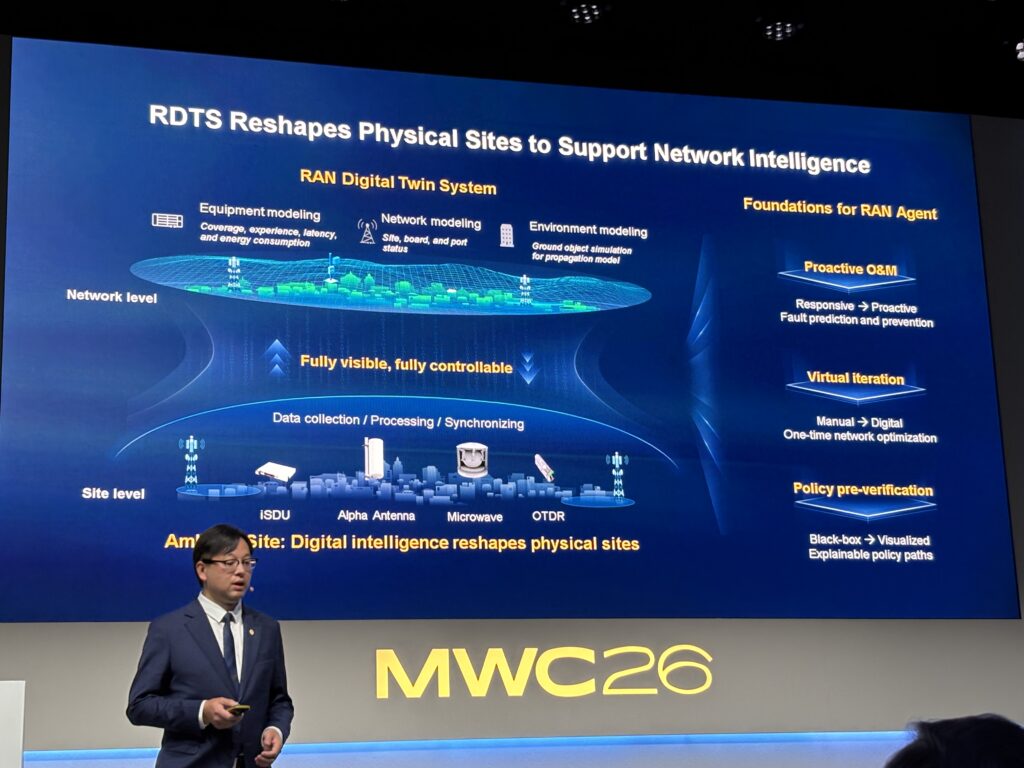

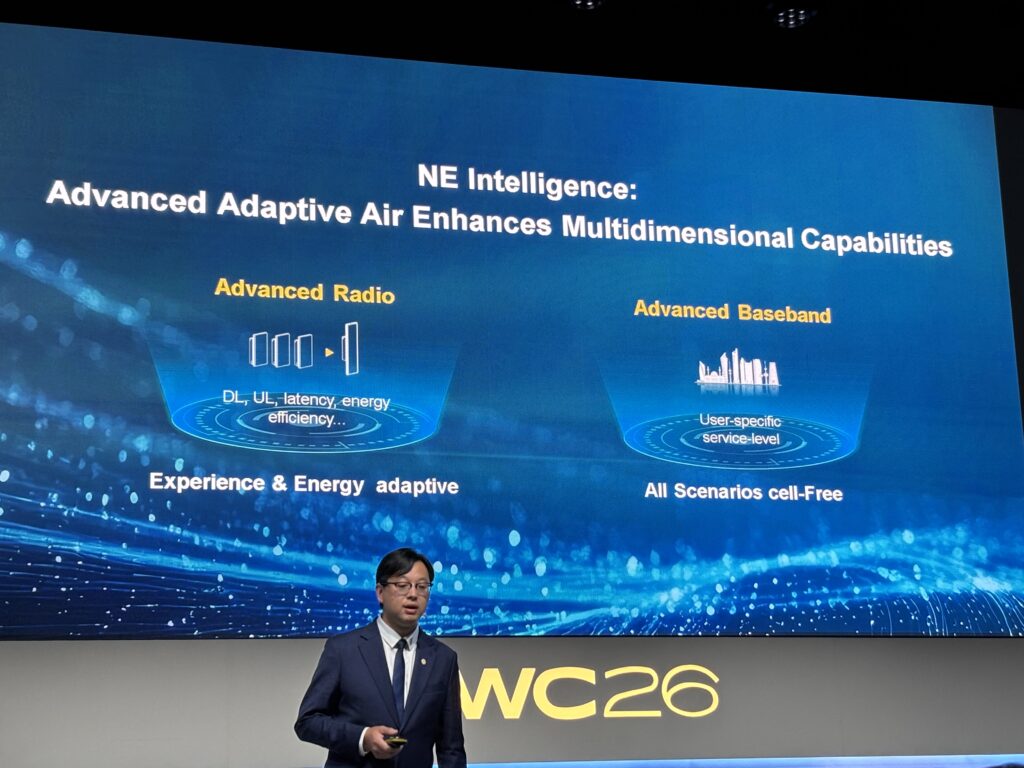

Huawei proposed the idea of RAN (radio access network) agents in 2024 at the Huawei Analyst Summit. The idea is to add intelligence to existing 5G and 5GA mobile networks. And Huawei’s RAN Intelligent Agents are a suite of AI components for carriers to do that.

It includes:

- A telecom foundation model

- RAN Digital Twins System (RDTS)

- Intelligent computing power

In a network, this enables intent-driven network management, real-time network prediction, multi-objective optimization, and self-learning.

Here’s the announcement if you want to know more about this.

Here are some slides from Fang Xiang’s talk on this if you’re curious. Otherwise skip to the next section.

***

Ok. That’s most of what I wanted to cover. A pretty important topic I think.

In Part 4, I shift to business use cases.

-Jeff

And here is some more fun stuff from the conference.

Xiaomi had a big exhibit and they were showing off their supercar, which I didn’t know about. It’s called the Vision. Pretty awesome.

——–

Related articles:

- The Winners and Losers in ChatGPT (Tech Strategy – Daily Article)

- Why ChatGPT and Generative AI Are a Mortal Threat to Disney, Netflix and Most Hollywood Studios (Tech Strategy – Podcast 150)

From the Concept Library, concepts for this article are:

- AI Cloud

- Generative AI and Agents

- AI Infrastructure and Data Centers

- Agentic-native networks

From the Company Library, companies for this article are:

- Huawei

———