Mobile World Congress 2026 just finished up in Barcelona. It was awesome.

- Lots of big announcements by cool companies.

- Lots of good food. Barcelona is so much fun.

- Lots of fun stuff. Such as Alibaba trolling Meta with their new Qwen smart glasses. Alibaba put up their booth right next to Meta, where it really overshadows it. And they put up ads with a guy that looks a lot like Mark Zuckerburg.

Here are the actual glasses.

I’m putting out several articles with my takeaways from the event.

But the big topic across all of them is agents.

That is what virtually every business was focusing on. It’s what the carriers present were really focusing on.

What will mobile networks look like in a world of +8B humans and +800B agents?

I’ll write a lot about agents in the next articles.

But my first takeaway from the event is about the rapid advances happening in AI compute infrastructure.

Beyond the Chip: The Shift to Massively Integrated AI Compute

Those of you who read me regularly know I’ve been on the topic of AI data centers for about a year. GenAI just has a different type of compute at the core. Which requires a new tech stack.

I recently did a 5-part article series on this new AI infrastructure. I kind of went crazy on this topic.

And that turned into a discussion about how AI data centers are different in China.

Which turned into a discussion about Huawei.

Because Huawei is really the only global player that is building semiconductors, data centers, mobile networks and edge devices (including smartphones and EVs). They are building the entire end-to-end architecture.

Within this, Huawei’s CloudMatrix 384 has been the big topic. In 2025, this was their new AI data center architecture. And this is top tier performance compute. It is for hyperscalers and other frontier-level AI models. It is 384 of Huawei’s Ascend 910C NPUs. Plus, some super-fast connectivity and lots of power.

Here’s what the 384 looks like:

So, I was pretty excited when Huawei announced at MWC2026 that they were releasing a big upgrade to their AI compute and data centers. That’s the Atlas 950 SuperPoD.

Huawei’s Big Announcement Was the Atlas 950 SuperPoD, Its New AI Computing Architecture

Here’s a photo of the new Atlas 950 SuperPoD from the Huawei exhibit at MWC. I’ve had to blur out the technical information. But I’m adding it to my collection of photos with big servers. I think I might be the only person who really likes taking pictures with server racks. I have a whole series.

Seaway Zhang (President of Huawei Computing Line) said the shift from AI to agents could require:

- A 10-100x increase in computing

- A 5-10x increase in memory. That means KVCache is a big challenge.

- Latency below 20 ms.

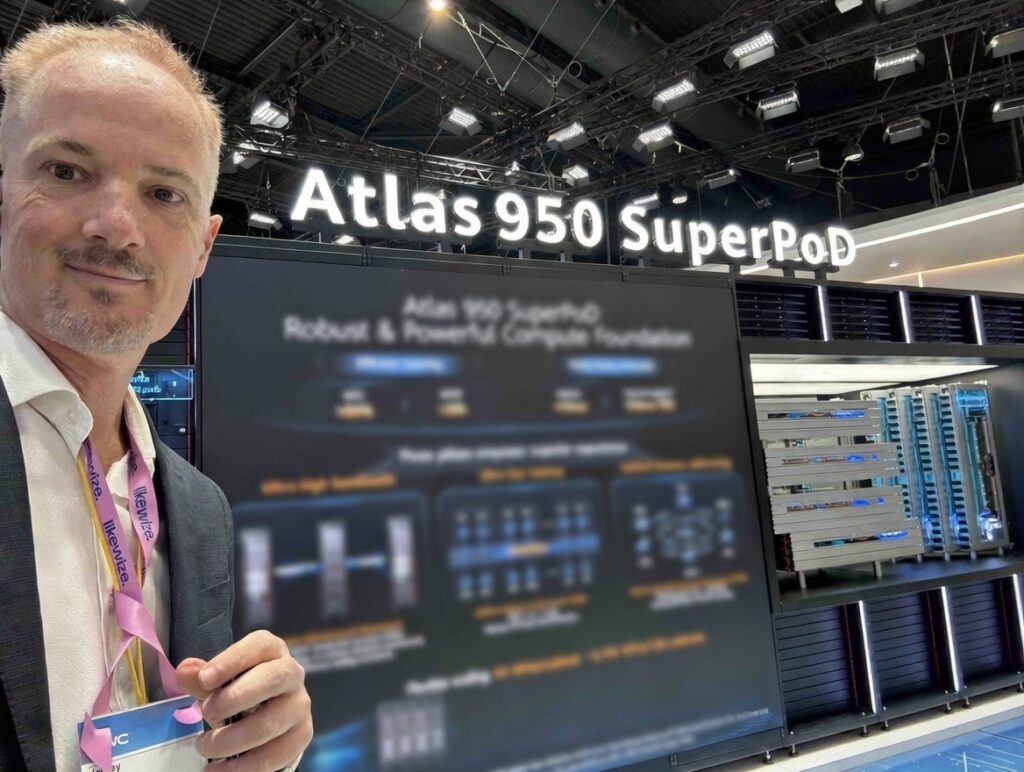

Here’s a slide from Seaway Zhang’s talk.

And the main selling points of the new Atlas SuperPoD are:

- High performance inference and training.

- Ultra-high bandwidth.

- Ultra-low latency. From 40ms to 10ms.

- Unified memory addressing.

- Flexible scaling.

So, you can see how it’s being positioned. Training is going to have to become much faster and more stable. Inference is going to need to be able to handle the big swings in usage.

Here’s a slide from Seaway Zhang’s talk on the Atlas 950.

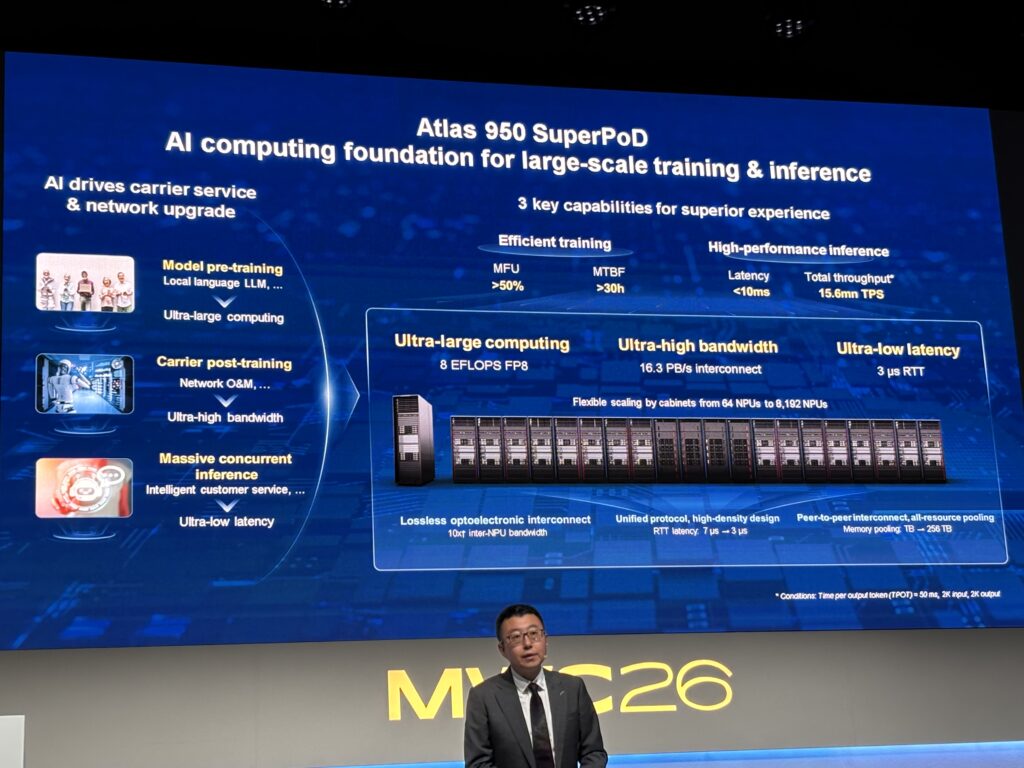

Finally, a tip of the hat to the Huawei event people. Huawei is really good at exhibitions in general. They put out a ton of information about their products on super dense slides, which is really helpful for a guy like me.

But at MWC2026, they really went to the next level. Huawei had, by far, the largest exhibition at the event. Ericsson and Nokia were tiny by comparison. Here’s their exhibition, which took up like 70% of one of the main halls.

Brute Force at Scale: The Atlas 950 SuperPoD Has 8,192 NPUs

AI data centers are not really data centers. They are not about having off-site compute, storage and apps anymore. AI data centers are giant integrated computers. You could argue they are the infrastructure of intelligence.

And they need a crazy amount of parallel computing power with low latency. If you’re going to have an app in the cloud drive your car, it needs lots of computing power and no lag time.

In the West, NVIDIA is the “go to” GPU semiconductor and AI server rack. But due to US regulations, Huawei and most of China are pioneering an independent AI compute architecture. And the trick is to match NVIDIA-level compute performance without using NVIDIA GPUs, which are more powerful.

Huawei’s solution is to put together a lot more of its less powerful chips. So, the SuperPoD combines 8,192 of their Ascend 950DT NPUs.

- The SuperPoD has 64 NPUs in each cabinet and each SuperPod has 128 compute cabinets (plus 32 communication cabinets). So that’s 8,192 NPUs per SuperPoD.

- These Ascend 950DTs are 5nm/7nm-class transistors. Compared to NVIDIA Blackwell (B200) which is 4nm-class.

- The SuperPoD also uses different types of NPUs in its racks. Heterogenous computing is a big part of the strategy. The SuperPoD includes:

- Huawei’s 950DT, which is their high-performance chip. It is optimized for decode and training (hence “DT”).

- Huawei’s 950PR, which is more optimized for efficiency and inference. The PR stands for prefill and recommendation.

- A SuperPod configured for the highest performance would have 100% DT NPUs. Other configurations (say for live services requiring fast efficient inference) might have 70% DT and 30% PR.

Compare this approach to NVIDIA’s DGX SuperPOD (specifically the newer Blackwell and Vera Rubin architectures). It has 576 GPUs, compared to Huawei’s 8,192 NPUs.

The UnifiedBus: How Huawei Solves the 8,192 NPU Connection Problem

These AI data centers are integrated computers. And as you add more NPUs, how the NPUs connect to each other really matters. You can’t just keep expanding horizontally, with lots of additional routers and switches. Larger architectures mean more hierarchy, more delays and a larger physical space.

And this problem becomes more challenging when you have 14x the number of NPUs as NVIDIA.

- NVIDIA has 576 GPUs in 1-8 cabinets.

- Huawei’s SuperPoD has 8,192 NPUs in 160 cabinets. And that’s just for the SuperPod. In the SuperCluster, they are connecting +500,000 NPUs.

Huawei’s solution to this connection problem is to get rid of all the architecture and hierarchy. The SuperPoD is everything-to-everything computing. Every NPU connects directly with every other NPU.

Enter Huawei’s proprietary UnifiedBus (also known as “Lingqu”) optical interconnect.

Huawei’s UnifiedBus is designed to keep thousands of less-powerful chips in perfect sync across a giant room, overcoming physical distance and traditional data center bottlenecks.

This is what enables thousands of NPUs to function as a single computer. UnifiedBus (UB) is a single, unified interconnect protocol designed to replace multiple older, separate networking layers. While traditional data centers use one technology for talking (Ethernet/InfiniBand) and another for sharing memory (PCIe), Huawei’s UnifiedBus merges them into one optical fabric.

The UnifiedBus accomplishes two different things.

1. Direct Chip-to-Chip Interaction (i.e., a “Nervous System”)

In a normal data center, if Chip A in one cabinet needs to talk to Chip B in another cabinet, the data has to be packaged into a network format (like TCP/IP), sent through a switch, and unpacked at the other end. This creates a delay (i.e., latency).

- Huawei’s UnifiedBus removes the packaging step. It uses a peer-to-peer mesh where any chip can talk to any other chip directly. This is high-speed, peer-to-peer communication between NPUs. Instead of data traveling through several stops (CPU, Network Card, Switch), UnifiedBus creates a direct path.

- And this achieves 2-microsecond latency across a room the size of two basketball courts. This is fast enough for the 8,192 chips to stay in perfect sync during complex AI training.

2. Memory Pooling (i.e., a “Shared Brain”)

This is a really interesting part of the Atlas 950 and its Unified Bus. In traditional systems, each chip has its own local memory (HBM). If a chip runs out of space, it’s stuck.

- Huawei’s UnifiedBus supports “Memory Semantics.” This means the system treats the memory attached to all 8,192 chips as one single, big 1.1 Petabyte memory pool. To the software, it doesn’t look like a network transfer; it looks like the chip is just talking to its own local RAM.

- The Benefit: A developer doesn’t have to manually tell the system to “send data from Chip 1 to Chip 500.” They simply tell the software to “store this data,” and the system handles it across the entire pool. It behaves like one giant computer with a 1.1 PB hard drive that is as fast as local RAM.

How does it actually do this?

Ok. This part is just for those of you who really like this stuff. Feel free to jump to the next section otherwise.

Unified Bus is a combination of physical hardware innovation and a specialized software stack. The goal is to eliminate the standard bottlenecks in traditional data centers.

The Hardware Innovation is All-Optical Switching.

Traditional clusters use copper cables for short distances and standard optical cables for long distances. Huawei’s UnifiedBus replaces this with a specialized All-Optical Switching fabric. Because the Atlas 950 covers 1,000 square meters, electrical signals lose too much energy and speed. Huawei uses optical (light-based) interconnects to maintain speed over these long distances.

That means:

- Zero-Packet Loss: Traditional networking (like Ethernet) drops data packets when congested, causing delays. Huawei’s optical fabric is lossless, meaning data flows like water through a pipe without interruption.

- Nano-Second Fault Recovery: In a system with 8,192 chips, hardware failures are common. Huawei’s optical path can detect a fault and reroute data in 100 nanoseconds, so the AI training never stalls or crashes.

- Distance Correction: Because the system is spread across 1,000 m², the light signals are synchronized to ensure that data from a cabinet 50 meters away arrives at the exact same tick as data from a cabinet 5 meters away.

The Software Innovation is CANN 8.0.

This is the software layer that sits on top of the hardware. It’s Huawei’s version of CUDA. It has been updated to automatically “shred” the AI model into 8,192 pieces and tells the UnifiedBus exactly where to put each piece of data so the NPUs aren’t waiting on each other.

***

Ok. That’s it for UnifiedBus. Basically, NVIDIA is still the leader in NPUs. But Huawei is the leader in interconnect.

In Part 2, I’ll do the other part of the AI compute stuff, which is ecosystems and open source.

Cheers, Jeff

Here are some photos from the event.

———

Related articles:

- How Generative AI Services Are Disrupting Platform Business Models (2 of 2) (Tech Strategy – Daily Article)

- The Winners and Losers in ChatGPT (Tech Strategy – Daily Article)

- Why ChatGPT and Generative AI Are a Mortal Threat to Disney, Netflix and Most Hollywood Studios (Tech Strategy – Podcast 150)

From the Concept Library, concepts for this article are:

- AI Cloud

- Generative AI and Agents

- AI Infrastructure and Data Centers

From the Company Library, companies for this article are:

- Huawei

——–

I am a consultant and keynote speaker on how to increase digital growth and strengthen digital AI moats.

I am the founder of TechMoat Consulting, a consulting firm specialized in how to increase digital growth and strengthen digital AI moats. Get in touch here.

I write about digital growth and digital AI strategy. With 3 best selling books and +2.9M followers on LinkedIn. You can read my writing at the free email below.

Or read my Moats and Marathons book series, a framework for building and measuring competitive advantages in digital businesses.

This content (articles, podcasts, website info) is not investment, legal or tax advice. The information and opinions from me and any guests may be incorrect. The numbers and information may be wrong. The views expressed may no longer be relevant or accurate. This is not investment advice. Investing is risky. Do your own research.